Engineered Systems Applications

Risk, Failure & Vulnerability

Probabilistic Risk Assessment (PRA), Failure and Vulnerability Analysis

Engineering risk and failure analysis focuses on predicting the probability of those (presumably rare) failures in an engineered system that can lead to severe damage to the system, injury, loss of life, and/or perhaps damage to the surrounding environment. Vulnerability analysis focuses on identifying (and reducing) the vulnerability of engineered systems to both natural (e.g., weather-related) and man-made (e.g., sabotage, terrorism) disruptions.

These analyses are typically used to inform decisions about required levels of redundancy and other design features, and to evaluate system safety and risk. In these kinds of studies, the output of the analysis is typically the probability of a particular high-consequence outcome (e.g., catastrophic failure of the system), together with identification of those events or components most likely to lead to that outcome. Based on this information, specific actions can be identified (e.g., design changes or modification of operating procedures) to reduce the risk.

GoldSim combines the flexibility of a general-purpose, highly graphical probabilistic simulation framework with specialized features for reliability analysis. This lets you build quantitative, transparent risk, failure and vulnerability analysis models, ask "what if" questions about alternative designs, and make defensible risk management decisions. GoldSim is flexible and powerful enough to support a “total system” model that represents the interactions, interdependencies and feedbacks among the various system components (including humans). Without such a model, it may not be possible to identify potential failure mechanisms, fatal flaws or system incompatibilities.

In particular, the GoldSim Reliability Module provides powerful features to support engineering risk and reliability analysis. The Reliability Module can be used to compute the probability of specific consequences (e.g., catastrophic failure of the system). GoldSim catalogs and analyzes failure scenarios, which allows for key sources of unreliability and risk to be identified.
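As a rough illustration of the kind of calculation described above, the following Python sketch estimates the probability of a system-level failure by Monte Carlo sampling and tallies which failure scenarios contribute to it. It is not GoldSim or the Reliability Module; the system layout (two redundant pumps feeding one valve), the failure probabilities, and the assumption of independent failures are all hypothetical.

```python
import random

def simulate_system(n_trials=100_000, p_pump=0.05, p_valve=0.01, seed=1):
    """Estimate P(system failure) for two redundant pumps feeding one valve.

    The system fails if BOTH pumps fail or if the valve fails.
    Component failures are assumed independent; all numbers are illustrative.
    """
    rng = random.Random(seed)
    failures = 0
    causes = {"both_pumps": 0, "valve": 0}
    for _ in range(n_trials):
        pump_a = rng.random() < p_pump
        pump_b = rng.random() < p_pump
        valve = rng.random() < p_valve
        both_pumps = pump_a and pump_b
        if both_pumps or valve:
            failures += 1
            # Record every scenario present in this failed realization.
            if both_pumps:
                causes["both_pumps"] += 1
            if valve:
                causes["valve"] += 1
    return failures / n_trials, causes

def exact_failure_probability(p_pump=0.05, p_valve=0.01):
    """Closed-form check, valid only under the independence assumption."""
    return 1.0 - (1.0 - p_pump ** 2) * (1.0 - p_valve)

p_hat, causes = simulate_system()
p_exact = exact_failure_probability()
print(f"simulated: {p_hat:.4f}, exact: {p_exact:.4f}, scenario counts: {causes}")
```

In this toy case the single valve dominates the failure count even though each pump is less reliable, which is the sort of insight a redundancy study is meant to surface.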

Learn More

Technical Papers

-

Steel Pipeline Failure Probability Evaluation Based on In-Line Inspection Results

13th Pipeline Technology Conference – March 2018

Maciej Witek, Warsaw University of Technology

The main goal of this paper is to estimate onshore buried pipeline failure probability based on Magnetic Flux Leakage (MFL) inspection data. A code-based engineering approach to estimate the failure pressure was selected as appropriate to be applied directly after in-line inspections, due to the scope of the available data, before any expansive field excavations for direct observations. The Det Norske Veritas DNV-RP-F101 analytical method of burst pressure calculation for a straight pipe was applied. A probabilistic methodology was used to evaluate the severity of part-wall external corrosion defects and their growth over time on a gas transmission grid. The Monte Carlo numerical method was selected in this paper for estimation of pipeline failure probability due to external corrosion with respect to the statistical distribution of input parameters. The evaluation of the burst pressure of the pipeline as a function of operation time was computed using reliability software called GoldSim.
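The approach in this abstract can be sketched in a few lines of Python. The burst pressure function below uses the commonly quoted single-defect capacity form associated with DNV-RP-F101, without the standard's partial safety factors, and should be checked against the standard before any real use. The pipe dimensions, material strength, defect sizes, corrosion growth rate, operating pressure and all distributions are hypothetical values chosen for illustration; they are not taken from the paper.

```python
import math
import random

def burst_pressure(D, t, uts, d, L):
    """Burst pressure (MPa) of a straight pipe with one corrosion defect.

    D: outer diameter, t: wall thickness, d: defect depth, L: defect length
    (all in mm); uts: ultimate tensile strength (MPa).
    """
    Q = math.sqrt(1.0 + 0.31 * (L / math.sqrt(D * t)) ** 2)
    return (2.0 * t * uts / (D - t)) * (1.0 - d / t) / (1.0 - d / (t * Q))

def failure_probability(years, p_op=6.0, n_trials=20_000, seed=7):
    """Fraction of sampled defects whose burst pressure falls below p_op."""
    rng = random.Random(seed)
    fails = 0
    for _ in range(n_trials):
        D = 508.0                                      # mm, treated as fixed
        t = rng.gauss(8.0, 0.2)                        # mm
        uts = rng.gauss(530.0, 20.0)                   # MPa
        d0 = rng.gauss(0.3, 0.05) * 8.0                # mm, depth at inspection
        L = rng.gauss(200.0, 20.0)                     # mm
        rate = rng.lognormvariate(math.log(0.2), 0.5)  # mm/year, linear growth
        # Grow the defect and clamp it inside the wall to keep the formula valid.
        d = min(max(d0 + rate * years, 0.0), 0.99 * t)
        if burst_pressure(D, t, uts, d, L) < p_op:
            fails += 1
    return fails / n_trials

for yr in (0, 10, 20, 30, 40):
    print(f"year {yr:>2}: P(failure) ~ {failure_probability(yr):.4f}")
```

Reusing the same seed for every year means each trial follows the same sampled defect through time, so the estimated failure probability cannot decrease as the operating time grows.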

-

Operational Safety at U.S. Army Corps of Engineers Dam and Hydropower Facilities

Africa 2017 – Hydropower and Dams – March 2017

Robert Patev, Adiel Komey and Gregory Baecher

The quantification of operational risks at US Army Corps of Engineers (USACE) dam and hydropower projects is a critical piece of the overall USACE risk assessment processes. Operational risks need to be considered for both the daily operations and maintenance of the dam and hydropower systems and for emergency operations required during flood events. Many USACE dam and hydropower projects are multi-purpose, and the methodology developed needs to be applied holistically to all operational aspects of the projects. Dams, along with their spillways and other waterways, are built to retain and control the flow of water for purposes of power production, water supply, navigation, recreation, flood risk mitigation, and environmental restoration. This paper will define a system methodology that evaluates the performance of structural, mechanical, electrical controls and sensing equipment over a range of loading conditions in combination with human factors such as work environment and stress, internal communication, operator training, and management policies and practices. The aim of this system modelling is to identify weaknesses and corrective actions in areas such as corrective maintenance activities, plant staff working environments and level of job training, horizontal and vertical communication with upper management, and operations and maintenance manuals for dam and hydropower projects.

-

A probabilistic framework for comparison of dam breach parameters and outflow hydrograph generated by different empirical prediction methods

Environmental Modelling & Software, Vol. 86, Pgs. 248–263, ISSN 1364-8152, © 2016 Elsevier Ltd. – December 2016

Ebrahim Ahmadisharaf, Alfred J. Kalyanapu, Brantley A. Thames, Jason Lillywhite

This study presents a probabilistic framework to simulate a dam breach and evaluates the impact of using four empirical dam breach prediction methods on breach parameters (i.e., geometry and timing) and outflow hydrograph attributes (i.e., time to peak, hydrograph duration and peak). Mean values and percentiles of breach parameters and outflow hydrograph attributes are compared for a hypothetical overtopping failure of Burnett Dam in the state of North Carolina, USA. Furthermore, utilizing the probabilistic framework of GoldSim, the least and most uncertain methods, alongside those giving the most critical value, are identified for these parameters. The multivariate analysis also indicates that breach parameters alone are not necessarily sufficient to characterize outflow hydrograph attributes. However, the timing characteristic of the breach is generally a more important driver than its geometric features.

-

Vulnerability Assessment to Support Integrated Water Resources Management of Metropolitan Water Supply Systems

Journal of Water Resources Planning and Management, DOI: 10.1061/(ASCE)WR.1943-5452.0000738. © 2016 American Society of Civil Engineers – January 2016

Erfan Goharian, Steven J. Burian, Jason Lillywhite, and Ryan Hile

The combined actions of natural and human factors change the timing and availability of water resources and, correspondingly, water demand in metropolitan areas. This leads to an imbalance between supply and demand, resulting in increased vulnerability of water supply systems. Accordingly, methods for systematic analysis and multifactor assessment are needed to estimate the vulnerability of individual components in an integrated water supply system. This paper introduces a new approach to comprehensively assess vulnerability by integrating water resource system characteristics with factors representing exposure, sensitivity, severity, potential severity, social vulnerability, and adaptive capacity. The effectiveness and advantages of the proposed approach are checked through an investigation of the water supply system of Salt Lake City (SLC), Utah. First, an integrated water resource model was developed using GoldSim to allocate water from different sources in SLC among designated demand points. The model contains individual simulation modules with representative interconnections among the natural hydroclimate system, built water infrastructure, and institutional decision making. The results of the analysis illustrate that basing vulnerability on a sole factor may lead to insufficient understanding and, hence, inefficient management of the system. The new vulnerability index and assessment approach was able to identify the most vulnerable water sources in the SLC integrated water supply system. In conclusion, use of a more comprehensive approach to simulate the system behavior and estimate vulnerability gives decision makers more guidance for detecting vulnerable components of the system and improving decision making.

-

Incorporating Potential Severity into Vulnerability Assessment of Water Supply Systems under Climate Change Conditions

Journal of Water Resources Planning and Management, 2015, DOI: 10.1061/(ASCE)WR.1943-5452.0000579. © 2015 American Society of Civil Engineers – November 2015

Erfan Goharian, S.M.ASCE; Steven J. Burian, M.ASCE; Courtenay Strong (University of Utah); Tim Bardsley (Western Water Assessment)

In response to climate change, vulnerability assessment of water resources systems is typically performed based on quantifying the severity of the failure. This paper introduces an approach to assess vulnerability that incorporates a set of new factors. The method is demonstrated with a case study of a reservoir system in Salt Lake City using an integrated modeling framework composed of a hydrologic model and a systems model driven by temperature and precipitation data for a 30-year historical (1981–2010) period. The climate of the selected future (2036–2065) simulation period was represented by five combinations of warm or hot, wet or dry, and central tendency projections derived from the World Climate Research Programme's (WCRP's) Coupled Model Intercomparison Project Phase 5. The results of the analysis illustrate that basing vulnerability on severity alone may lead to an incorrect quantification of the system vulnerability. In this study, a typical vulnerability metric (severity) incorrectly provides low magnitudes under the projected future warm-wet climate condition. The proposed new metric correctly indicates the vulnerability to be high because it accounts for additional factors. To further explore the new factors, a sensitivity analysis (SA) was performed to show the impact and importance of the factors on the vulnerability of the system under different climate conditions. The new metric provides a comprehensive representation of system vulnerability under climate change scenarios, which can help decision makers and stakeholders evaluate system operation and infrastructure changes for climate adaptation.

-

Systems Reliability of Flow Control in Dam Safety

12th International Conference on Applications of Statistics and Probability in Civil Engineering, ICASP12 – July 2015

Adiel Komey, Qianli Deng, Gregory Baecher, P. Andy Zielinski and Tyler Atkinson

The reliable performance of a spillway system depends on the many environmental and operational demand functions placed upon it by basin hydrology, the hydraulic conditions at reservoirs and dams, operating rules for the cascade of reservoirs in the basin, and the vagaries of human and natural factors such as operator interventions or natural disturbances such as ice and floating debris. These systems interact to control floods, condition flows, and filter high frequencies in the river discharge. Their function is to retain water volumes and to pass flows in a controlled way. A systems simulation approach is presented for grappling with these varied influences on flow-control systems in hydropower installations. The river system studied is a series of four power stations in northern Ontario. At the head of the cascade is a seasonally-varying inflow. The remaining three dams downstream have little storage capacity. Each has two vertical lift gates and all four structures have approximately the same spillway capacity. The problem is to conceptualize a systems engineering model for the operation of the dams, spillways, and other components; then to employ the model through stochastic simulation to investigate protocols for the safe operation of the spillway and flow control system.

-

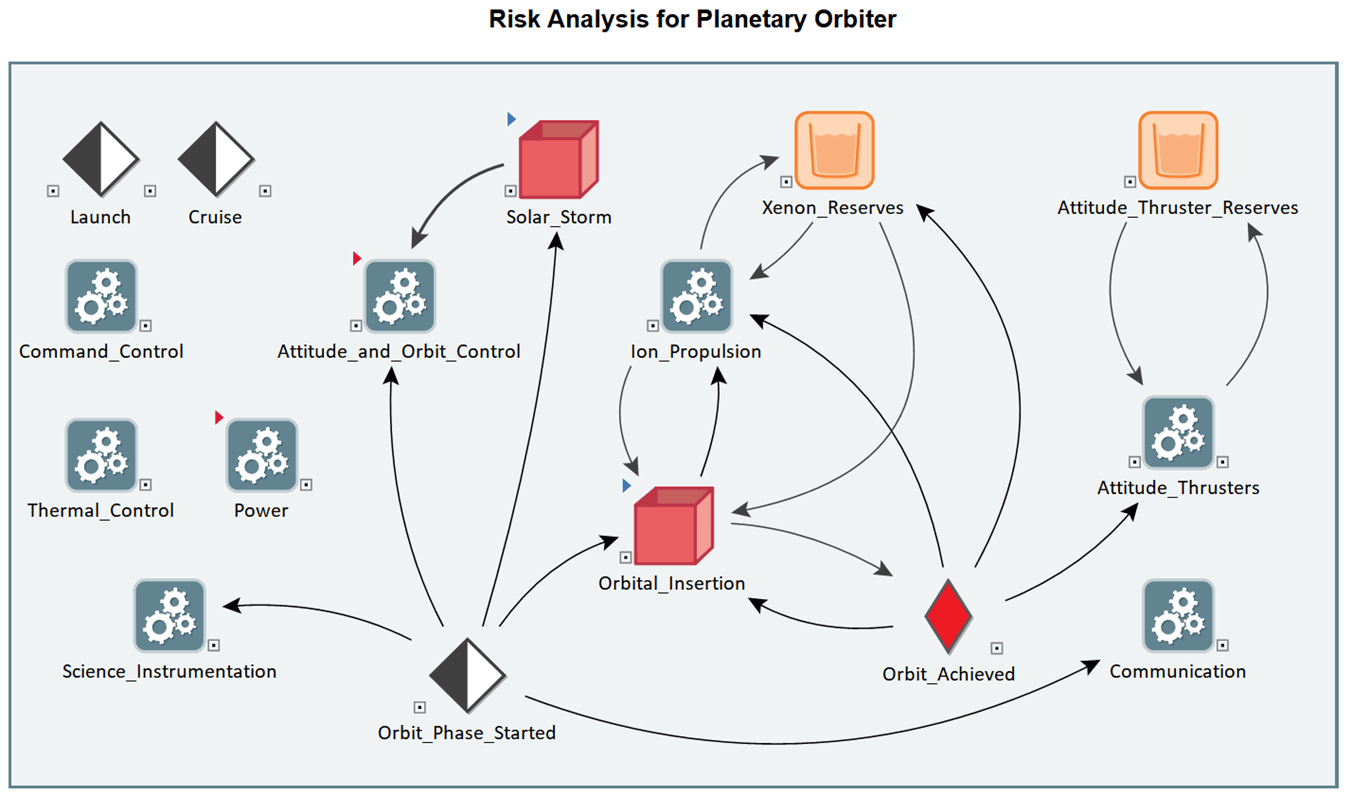

Engineering Risk Assessment of a Dynamic Space Propulsion System Benchmark Problem

Reliability Engineering & System Safety – July 2015

Donovan L. Mathias, Christopher J. Mattenberger, and Susie Go (NASA Ames Research Center)

The Engineering Risk Assessment (ERA) team at NASA Ames Research Center develops dynamic models with linked physics-of-failure analyses to produce quantitative risk assessments of space exploration missions. This paper applies the ERA approach to the 2014 Probabilistic Safety Assessment and Management conference Space Propulsion System Benchmark Problem, which investigates dynamic system risk for a deep space ion propulsion system over three missions with time-varying thruster requirements and operations schedules. The dynamic missions are simulated using commercial software to generate integrated loss-of-mission (LOM) probability results via Monte Carlo sampling. The simulation model successfully captured all dynamic aspects of the benchmark missions, and convergence studies are presented to illustrate the sensitivity of integrated LOM results to the number of Monte Carlo trials. In addition, to evaluate the relative importance of dynamic modeling, the Ames Reliability Tool (ART) was used to build a series of quasi-dynamic, deterministic models that incorporated varying levels of the problem's dynamics. The ART model did a reasonable job of matching the simulation results for the simpler mission case, while auxiliary dynamic models were required to adequately capture risk-driver rankings for the more dynamic cases. This study highlights how state-of-the-art techniques can adapt to a range of dynamic problems.
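A convergence study of the kind mentioned in this abstract can be illustrated with a minimal Python sketch. It treats each Monte Carlo trial as a simple Bernoulli draw with a hypothetical loss-of-mission probability `p_true`, which stands in for a full dynamic mission simulation, and reports how the estimate and its approximate 95% confidence half-width change with the number of trials. None of the numbers come from the paper.

```python
import math
import random

def lom_estimate(n_trials, p_true=0.02, seed=3):
    """Return (estimate, standard error) of a loss-of-mission probability.

    Each trial is a Bernoulli draw with the hypothetical probability p_true.
    """
    rng = random.Random(seed)
    hits = sum(rng.random() < p_true for _ in range(n_trials))
    p_hat = hits / n_trials
    # Binomial standard error of the estimated probability.
    std_err = math.sqrt(p_hat * (1.0 - p_hat) / n_trials)
    return p_hat, std_err

for n in (1_000, 10_000, 100_000):
    p_hat, se = lom_estimate(n)
    print(f"{n:>7} trials: p = {p_hat:.4f} +/- {1.96 * se:.4f}")
```

Because the standard error scales with the inverse square root of the trial count, halving the confidence interval requires roughly four times as many trials, which is why rare-event results are usually reported alongside a convergence check.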

-

Developing "Flood Loss Curve" for City of Sacramento

ASFPM Conference – June 2015

Md N M Bhuyian, Joseph Thornton, and Alfred Kalyanapu, Tennessee Tech University

The current research presents the development of a "flood loss curve" for the city of Sacramento, California. A flood loss curve is defined as a functional relationship between direct flood damages and flood intensity. This study uses a series of design flood events for the American River to investigate possible damage caused at different flood intensities that the city may experience in the future. These scenarios are generated using a Monte Carlo-based hydrograph generator, and are used as inputs for a HEC-RAS model to develop flood intensity parameters including flood inundation extents, depths, velocities and arrival time. These simulated flood parameters are input into HEC-FIA to compute direct damages. Results indicated a positive correlation between losses and flood intensity, reinforcing our flood loss curve concept and its value. This methodology can be used for preliminary vulnerability assessment and ‘back of the envelope’ loss estimates for impending flood events.

-

Investigation of the Impact of Streamflow Temporal Variation on Dam Overtopping Risk: Case Study of a High-Hazard Dam

World Environmental and Water Resources Congress – May 2015

Ebrahim Ahmadisharaf and Alfred J. Kalyanapu, Tennessee Tech University

This study investigates the impact of streamflow temporal variation on the annual overtopping risk of Burnett Dam using a risk and reliability analysis approach. Overtopping risk is defined as the probability of reservoir inflow exceeding the spillway capacity. A performance function is used to determine the annual overtopping risk, which has two primary inputs: 1) dam resistance: total spillway capacity; and 2) maximum load: highest inflow discharge. Highest inflow discharge is determined by analysis of the peak streamflow records of a gaging station upstream of the dam. For each year, the highest peak streamflow is routed through the dam upstream channel using a calibrated hydrologic model. Taking the routed inflow, the overtopping risk is determined for each year. Finally, temporal change in the overtopping risk over the 1989–2013 period is investigated using the Mann-Kendall test.
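The two calculations named in this abstract, a resistance-minus-load performance function and a Mann-Kendall trend test, can be sketched in Python as follows. This is a simplified illustration rather than the authors' implementation: the performance function is evaluated on a plain list of sampled inflows, the Mann-Kendall statistic omits the tie correction, and the function names are hypothetical.

```python
import math

def overtopping_risk(peak_inflows, spillway_capacity):
    """Fraction of samples where Z = capacity - inflow is negative."""
    z = [spillway_capacity - q for q in peak_inflows]
    return sum(1 for v in z if v < 0) / len(z)

def mann_kendall(series):
    """Return (S, Z) for the Mann-Kendall trend test (no tie correction)."""
    n = len(series)
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = series[j] - series[i]
            s += (diff > 0) - (diff < 0)   # sign of each later-minus-earlier pair
    var_s = n * (n - 1) * (2 * n + 5) / 18.0
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)     # continuity correction
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0
    return s, z

# Illustrative inputs only: sampled peak inflows and a made-up annual risk series.
print(overtopping_risk([100.0, 200.0, 300.0, 400.0], spillway_capacity=250.0))
print(mann_kendall([0.010, 0.012, 0.011, 0.015, 0.018]))
```

A positive S indicates a tendency for later values to exceed earlier ones; comparing |Z| with a standard normal quantile (for example 1.96 at the 5% level) gives the usual significance check.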

-

Comparative Analysis of Static and Dynamic Probabilistic Risk Assessment

Paper published in the proceedings of the Reliability and Maintainability Symposium (RAMS) – January 2015

C. Mattenberger (NASA Ames Research Center, Moffett Field, CA, USA), D.L. Mathias, and S. Go

This study compares and contrasts three different approaches for the probabilistic safety assessment of crewed spacecraft: traditional static fault tree, fault tree hybrid, and dynamic Monte Carlo simulation (using GoldSim).

-

Using GoldSim for Joint Probability Assessment of Closure Times on Linear Infrastructure

Visions to Realities - Stormwater Queensland Conference proceedings, Noosa – June 2014

E. Symons, C. Gimber, Kellogg Brown and Root Pty Ltd

Flooding of major regional roads and rail corridors severely disrupts transport operations including the export of mined minerals from central and north Queensland which contribute heavily to the Australian economy. It is important for proponents developing new infrastructure and operators of existing infrastructure to understand annual closure times resulting from flooding. Long linkages of road or rail that cross a number of catchment basins and a large number of drainage lines can be difficult to assess due to spatial variation, moving storms and concurrent storms. The objective of this paper is to create a simple methodology, using joint probability, to quantitatively assess the closure time along linear infrastructure. For this paper, GoldSim was used to represent the road or rail system.

-

An Integrated Reliability and Physics-based Risk Modeling Approach for Assessing Human Spaceflight Systems

Proceedings, Probabilistic Safety Assessment and Management PSAM 12, Honolulu, HI – June 2014

Susie Go, Donovan L. Mathias, Scott Lawrence, Ken Gee, NASA Ames Research Center and Christopher J. Mattenberger, Science and Technology Corp

This paper presents an integrated reliability and physics-based risk modeling approach for assessing human spaceflight systems. The approach is demonstrated using an example end-to-end risk assessment of a generic crewed space transportation system during a reference mission to the International Space Station. The behavior of the system is modeled using analysis techniques from multiple disciplines in order to properly capture the dynamic time- and state-dependent consequences of failures encountered in different mission phases. This approach facilitates risk-informed design by providing more realistic representation of system failures and interactions; identifying key risk-driving sensitivities, dependencies, and assumptions; and tracking multiple figures of merit within a single, responsive assessment framework that can readily incorporate evolving design information throughout system development.

-

Engineering Risk Assessment of Space Thruster Challenge Problem

Proceedings, Probabilistic Safety Assessment and Management PSAM 12, Honolulu, HI – June 2014

Donovan L. Mathias, Susie Go, NASA Ames Research Center and Christopher J. Mattenberger, Science and Technology Corp.

Quantitative risk assessments of space exploration missions were developed by the Engineering Risk Assessment (ERA) team at NASA Ames Research Center, which uses GoldSim's discrete and continuous-time reliability elements. The model applies the ERA approach to the baseline and extended versions of the PSAM Space Thruster Challenge Problem, which investigates mission risk for a deep space ion propulsion system with time-varying thruster requirements and operations schedules. This study highlighted that state-of-the-art techniques can adequately adapt to a range of dynamic problems.

-

Comparison of Uncertainty and Sensitivity Analyses Methods Under Different Noise Levels

Presentation, PSAM12: Probabilistic Safety Assessment & Management Conference – June 2014

David Esh and Christopher Grossman, US Nuclear Regulatory Commission

Uncertainty and sensitivity analyses are an integral part of probabilistic assessment methods used to evaluate the safety of a variety of different systems. In many cases the systems are complex, information is sparse, and resources are limited. Models are used to represent and analyze the systems. To incorporate uncertainty, the developed models are commonly probabilistic. Uncertainty and sensitivity analyses are used to focus iterative model development activities, facilitate regulatory review of the model, and enhance interpretation of the model results. A large variety of uncertainty and sensitivity analysis techniques have been developed as modeling has advanced and become more prevalent. This paper compares the practical performance of six different uncertainty and sensitivity analysis techniques over ten different test functions under different noise levels. In addition, insights from two real-world examples are developed.

-

Impact of Spatial Resolution on Downstream Flood Hazard Due to Dam Break Events using Probabilistic Flood Modeling

5th Dam Safety Conference – September 2013

Ebrahim Ahmadisharaf, Md Nowfel Mahmud Bhuyian, and Alfred Kalyanapu, Tennessee Tech University

The objective of this study is to address the impact of spatial resolution on the relative accuracy of downstream flood hazard after a dam break event. It is hypothesized that higher spatial resolution will significantly increase the model predictive ability and the accuracy of flood hazard maps. The current study employs a two-dimensional (2D) flood model, Flood2D-GPU, in a probabilistic framework to investigate these spatial resolution impacts by applying dam break simulations to Burnett Dam near Asheville, NC. The dam break hydrograph is chosen as the uncertain parameter because it is the greater source of uncertainty in most situations. Using GoldSim, a Monte Carlo modeling software package, 99 stochastic dam break hydrographs representing various possible scenarios are generated. These hydrographs are input into Flood2D-GPU to produce probability-weighted flood hazard maps. The probabilistic simulations are carried out for 9 m, 31 m, 46 m, 62 m and 93 m spatial resolutions. The outcomes of this study will assist dam break modelers to enhance their Emergency Action Plans by providing recommendations for suitable spatial resolution and to avoid increased modeling time.
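One simple way to turn a set of stochastic flood simulations into a probability-weighted hazard map is to compute, for every grid cell, the fraction of runs in which the simulated depth exceeds a threshold. The Python sketch below does this for a tiny hand-made grid. It assumes equally weighted runs and a hypothetical 0.3 m depth threshold; the abstract does not state how the paper weights its runs, so this is an illustration of the general idea only.

```python
def exceedance_probability_map(depth_maps, threshold=0.3):
    """Per-cell fraction of equally weighted runs with depth above threshold.

    depth_maps: list of 2D grids (lists of rows) of flood depth in metres,
    one grid per stochastic run, all with the same shape.
    """
    n_runs = len(depth_maps)
    n_rows, n_cols = len(depth_maps[0]), len(depth_maps[0][0])
    return [
        [sum(run[r][c] > threshold for run in depth_maps) / n_runs
         for c in range(n_cols)]
        for r in range(n_rows)
    ]

# Four made-up runs on a 2 x 2 grid (depths in metres).
runs = [
    [[0.0, 0.5], [1.2, 0.1]],
    [[0.0, 0.4], [0.9, 0.6]],
    [[0.2, 0.1], [1.5, 0.0]],
    [[0.0, 0.7], [0.2, 0.0]],
]
hazard = exceedance_probability_map(runs)
print(hazard)
```

In a real study each grid would come from a 2D flood model run at a given spatial resolution, and the same calculation could be repeated per resolution to compare the resulting maps.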

-

GoldSim's Dynamic-Link Library (DLL) Interface for Cementitious Barriers Partnership (CBP)

WM2011 Proceedings – March 2011

Kevin G. Brown, Vanderbilt University; Frank Smith and Gregory Flach, Savannah River National Laboratory

The Cementitious Barriers Partnership (CBP) Project is a multi-disciplinary, multi-institutional collaboration supported by the United States Department of Energy (US DOE) Office of Waste Processing. The objective of the CBP project is to develop a set of tools to improve understanding and prediction of the long-term structural, hydraulic, and chemical performance of cementitious barriers used in nuclear applications. The project is focused on reducing the uncertainties of current methodologies for assessing cementitious barrier performance and increasing the consistency and transparency of the assessment process. To better characterize the uncertainties in the models used to predict barrier performance, GoldSim is used as a probabilistic framework with interfaces to external codes for specific calculations. A general dynamic-link library (DLL) interface has been developed to link GoldSim with external codes. The DLL that performs the linking function is designed to take a list of code inputs from GoldSim, create an input file for the external application, run the external code, and return a list of outputs, read from files created by the external application, back to GoldSim for analysis. Although currently used by CBP, the DLL is generic and can be used for a wide variety of external codes that need to be examined probabilistically. Use of the DLL to couple external codes to GoldSim helps enable improved risk-informed, performance-based decision-making and supports several of the strategic initiatives in the DOE Office of Environmental Management Engineering & Technology Roadmap.
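The linking pattern described in this abstract (take a list of inputs, write an input file, run the external code, read its output file, return a list of outputs) can be sketched in Python. This is not the CBP DLL and does not use GoldSim's DLL interface; the file names `inputs.txt` and `outputs.txt`, the one-number-per-line format, and the stand-in external program are all hypothetical.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

def run_external_code(command, inputs, workdir):
    """Write inputs to a file, run an external program, read back its outputs.

    command: argument list for the external program, which is assumed to
    read inputs.txt and write outputs.txt (one number per line) in workdir.
    """
    workdir = Path(workdir)
    (workdir / "inputs.txt").write_text("\n".join(str(x) for x in inputs))
    # check=True raises an error if the external code exits with a failure.
    subprocess.run(command, cwd=workdir, check=True)
    text = (workdir / "outputs.txt").read_text()
    return [float(line) for line in text.split()]

# Stand-in "external code": a tiny script that doubles every input value.
STAND_IN = (
    "vals = [float(v) for v in open('inputs.txt').read().split()]\n"
    "open('outputs.txt', 'w').write('\\n'.join(str(2 * v) for v in vals))\n"
)

with tempfile.TemporaryDirectory() as tmp:
    script = Path(tmp) / "external_code.py"
    script.write_text(STAND_IN)
    outputs = run_external_code([sys.executable, str(script)], [1.0, 2.5], tmp)

print(outputs)
```

Keeping the wrapper ignorant of what the external program computes is what makes this kind of coupling generic: a probabilistic driver only needs to supply sampled inputs and collect the returned outputs for each realization.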

-

Uncertainty Analysis for Unprotected Loss-of-Heat-Sink, Loss-of-Flow, and Transient-Overpower Events in Sodium-Cooled Fast Reactors

International Conference on Fast Reactors and Related Fuel Cycles (FR 2009), Kyoto, Japan – December 2009

Morris, E. E. and Nutt, W. M., Argonne National Laboratory

While the traditional approach to reactor safety analyses remains deterministic, this paper considers a stochastic approach for explicitly including uncertainty in safety parameters by applying Monte Carlo sampling coupled with established deterministic reactor safety analysis tools.

-

A System Model for Geologic Sequestration of Carbon Dioxide

Article in Environmental Science and Technology, Volume 43, Number 3, pgs. 565-570 – December 2008

Philip Stauffer, Hari Viswanathan, Rajesh Pawar and George Guthrie, Los Alamos National Laboratory

This article describes the CO2-PENS model developed to simulate capture, transport and injection in different geological reservoirs.

Overview: Poster from the 2007 GoldSim User Conference

-

Simulation Assisted Risk Assessment Applied to Launch Vehicle Conceptual Design

Reliability and Maintainability Symposium – January 2008

Donovan L. Mathias, Susie Go, Ken Gee, and Scott Lawrence, NASA Ames Research Center

This paper describes the application of simulation-based risk assessment to the analysis of abort during the ascent phase of a space exploration mission.

-

Risk Assessment for Unbound Granular Material Performance in Rural Queensland Pavements

Master's Thesis – January 2006

Meera Creagh, University of Queensland

This thesis describes the use of GoldSim to evaluate different material choices for road-building projects.

-

Predicting Risks in the Earth Sciences: Volcanological Examples

Los Alamos Science, Number 29, pgs. 56-69 – January 2005

Greg Valentine, Los Alamos National Laboratory

This article describes the process of volcanological risk assessment, including how it is modeled using GoldSim within the Yucca Mountain Total System Performance Assessment.

-

Development of a Dynamic Simulation Approach to Mission Risk and Reliability Analysis

American Nuclear Society International Topical Meeting on Probabilistic Safety Analysis, PSA 05 – January 2005

Ian Miller and Andrew Burns

This paper describes a NASA-funded project to develop a reliability analysis module for the GoldSim simulation software capable of modeling highly dynamic systems over the duration of the mission, taking into account variation in input parameters and the evolution of the system. To illustrate the approach, two NASA examples that have previously been evaluated using classical PRA approaches were developed using the simulation-based approach. Issues surrounding the translation of the classical PRA models into a simulation-based approach are discussed, and areas where the simulation-based approach provided additional insights into the system behavior are highlighted.